Industrial quality assurance has shifted from end-of-line inspection to something much closer to the production process itself. In many factories, defects are no longer something you simply catch and log; they are signals that need to be acted on immediately. This is where Edge AI for industrial quality assurance is finding its place, running directly on inspection systems alongside cameras and sensors rather than relying on remote infrastructure.

The change is less about adopting new technology and more about aligning inspection with how modern production lines actually operate.

When line speeds increase and product variation becomes harder to control, delays in inspection (whether caused by manual checks or cloud-based processing) start to show up as waste, rework, or missed defects.

On a typical line, those delays are not theoretical. They show up as bins of rejected parts or batches that require re-inspection.

Where Edge AI for Industrial Quality Assurance Fits on the Line

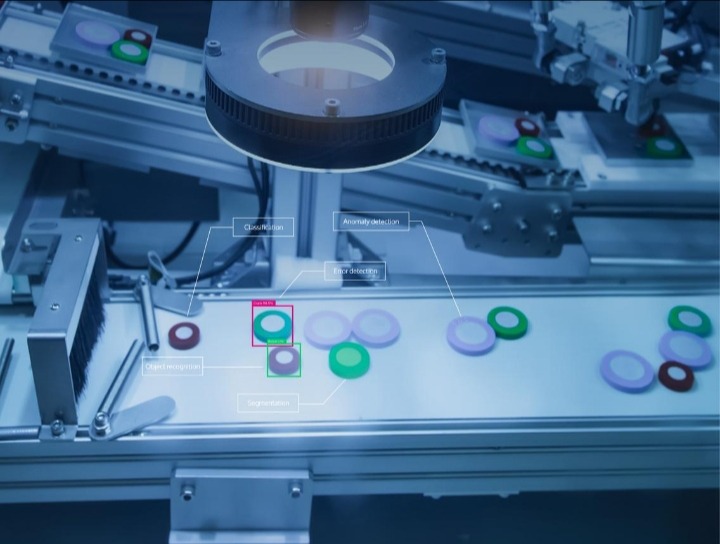

In practical terms, edge-based inspection systems sit directly at key points along the production line. These are usually stations where visual checks already exist: after assembly, before packaging, or at points where alignment and finishing are critical. Cameras capture images continuously, often under controlled lighting to reduce variability.

Instead of passing those images to a centralized system, models run locally on embedded hardware. Devices based on platforms like NVIDIA Jetson or Intel’s edge inference stack are commonly used because they can process high-throughput image data without introducing noticeable latency.

The output is immediate. Each product is accepted, rejected, or flagged for review. In many setups, that decision is passed directly to a reject mechanism or a programmable logic controller, allowing defective items to be removed before they move further down the line.
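As a rough illustration of that three-way outcome, the sketch below maps a model's defect score to a verdict. The names `Verdict` and `decide`, the assumption that the model emits a score between 0 and 1, and the threshold values are all hypothetical placeholders; a real station would set them from its own validation data.

```python
from enum import Enum


class Verdict(Enum):
    ACCEPT = "accept"
    REJECT = "reject"
    REVIEW = "review"


def decide(defect_score: float,
           accept_below: float = 0.2,
           reject_above: float = 0.8) -> Verdict:
    """Map a defect score in [0, 1] to one of three outcomes.

    The two thresholds are illustrative defaults, not recommended values.
    """
    if not 0.0 <= defect_score <= 1.0:
        raise ValueError("defect_score must be between 0 and 1")
    if defect_score < accept_below:
        return Verdict.ACCEPT
    if defect_score > reject_above:
        return Verdict.REJECT
    # Scores between the two thresholds are ambiguous: send to a person.
    return Verdict.REVIEW
```

Keeping a middle band for review is one way to avoid forcing a hard accept-or-reject call on borderline parts; how wide that band should be depends on how much manual review capacity the line has.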

This is not a separate system layered on top of production. It becomes part of the production flow.

From Rule-Based Vision to Learned Inspection

Traditional machine vision systems depend on predefined rules: edge detection thresholds, template matching, or geometric measurements. These approaches work well when conditions are stable, but they struggle when products vary slightly or when environmental factors change.

Edge AI systems take a different approach. Instead of defining every acceptable condition, models are trained on examples of correct and defective outputs. Over time, they learn patterns that are difficult to encode manually: subtle surface inconsistencies, irregular textures, or slight misalignments.

This shift is particularly visible in high-precision industries such as electronics and automotive manufacturing, where inspection tolerances are tight and variability is difficult to eliminate entirely. Research into deep learning for visual inspection has shown strong performance in identifying defects that fall outside rigid rule-based definitions, as discussed in studies on deep learning in manufacturing inspection.

The difference becomes clear during production changes. When a new batch of materials behaves slightly differently, rule-based systems often require recalibration. Learned systems tend to adapt more gracefully, provided they have been trained on representative data.

Deployment Constraints Most Teams Underestimate

Running models at the edge introduces constraints that are easy to overlook during initial planning. Unlike cloud environments, edge devices have limited compute capacity, memory, and power budgets. Models must be optimized to run efficiently without introducing delays that could slow down inspection.

This is where frameworks such as ONNX Runtime and hardware-specific toolchains become important. Models are often compressed through quantization or pruning to meet performance requirements while maintaining acceptable accuracy.
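To make the idea of quantization concrete, here is a minimal pure-Python sketch of affine 8-bit quantization applied to a handful of weights. It is not the API of ONNX Runtime or any vendor toolchain; the function names `quantize_int8` and `dequantize_int8` are made up for this example, and production tools add calibration and per-channel handling that is omitted here.

```python
def quantize_int8(values):
    """Map floats to int8 codes with an affine (scale + zero-point) scheme."""
    lo = min(min(values), 0.0)  # widen the range so 0.0 is representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0
    if scale == 0.0:            # all-zero input: any positive scale works
        scale = 1.0
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point))
             for v in values]
    return codes, scale, zero_point


def dequantize_int8(codes, scale, zero_point):
    """Recover approximate float values from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


weights = [-1.0, 0.0, 0.5, 1.0]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize_int8(codes, scale, zero_point)
```

Each restored value differs from the original by at most about one quantization step (`scale`). That small, bounded error is the accuracy cost traded for storing one byte per weight instead of four.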

Environmental conditions also play a significant role. Lighting inconsistencies, vibration from nearby equipment, and even minor shifts in camera positioning can affect model performance.

In practice, teams spend a considerable amount of time stabilizing data capture before they see consistent results from their models. Most issues in production are not caused by the model itself, but by the conditions it operates in.

Handling Defects That Do Not Have Labels

One of the limitations of supervised learning is the need for labeled defect data. In many production environments, especially new lines, there may not be enough examples of every possible defect to train a model effectively.

To address this, many systems incorporate anomaly detection techniques. Instead of learning every defect type, the model learns what normal output looks like and flags deviations. This approach is particularly useful for identifying rare or previously unseen issues.
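A very small sketch of that idea follows, assuming each inspected unit has already been reduced to a short list of numeric features (for example, a measured width and a surface roughness value). The class name `NormalProfile`, the features, and the threshold of 3 standard deviations are all hypothetical; industrial systems typically learn from image embeddings rather than two hand-picked measurements.

```python
from statistics import mean, stdev


class NormalProfile:
    """Learn per-feature mean and spread from known-good samples only."""

    def __init__(self, normal_samples):
        columns = list(zip(*normal_samples))
        self.means = [mean(col) for col in columns]
        # Guard against zero spread so the division below stays defined.
        self.stds = [stdev(col) or 1e-9 for col in columns]

    def score(self, sample):
        """Largest absolute z-score across features."""
        return max(abs(x - m) / s
                   for x, m, s in zip(sample, self.means, self.stds))

    def is_anomalous(self, sample, threshold=3.0):
        return self.score(sample) > threshold


# Hypothetical good parts: [width_mm, roughness]
good_parts = [[10.0, 0.50], [10.2, 0.52], [9.8, 0.48],
              [10.1, 0.51], [9.9, 0.49]]
profile = NormalProfile(good_parts)
```

Note that no defective example is needed to build the profile: `profile.is_anomalous([10.0, 0.70])` flags a part simply because its roughness lies far outside what the good parts showed.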

Recent work in industrial anomaly detection highlights how models trained on normal samples can still achieve strong detection performance, even when defect data is scarce, as outlined in research on anomaly detection methods.

In practice, this reduces the dependency on large, curated defect datasets and allows inspection systems to be deployed earlier in the lifecycle of a product line.

Integration with Existing Control Systems

Factories rarely operate on greenfield infrastructure. Most production environments rely on established systems for control and monitoring, including PLCs, SCADA platforms, and manufacturing execution systems.

Any edge AI deployment needs to integrate with these components without disrupting existing workflows.

Communication standards such as OPC UA are often used to bridge this gap, allowing inspection results to be shared with control systems in a structured way.

The challenge is ensuring that decisions made by the AI system align with operational expectations: for example, how many false rejects are acceptable, or when a line should be stopped for investigation.

These are operational decisions, not purely technical ones.

Traceability and Data in Regulated Environments

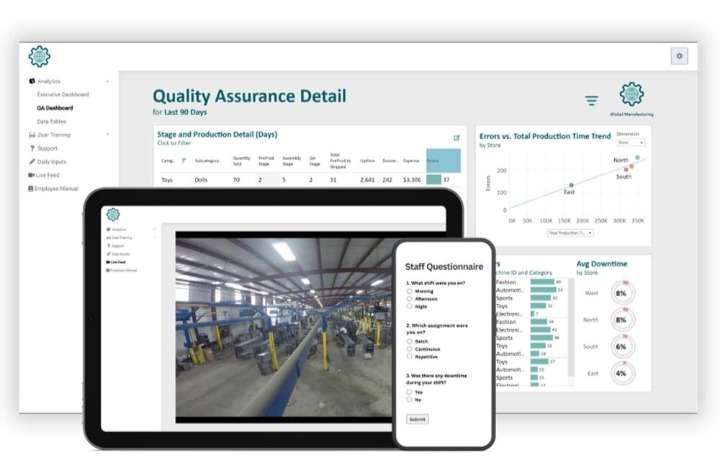

In industries such as pharmaceuticals and aerospace, inspection results are not only used for immediate decisions but also for long-term documentation. Edge AI systems can capture images, classification outputs, and timestamps for each inspected unit, creating a detailed record of production.
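One way such a per-unit record could be structured is sketched below. The field names, the `InspectionRecord` class, and the JSON-lines output are assumptions made for illustration (the `model_version` field in particular is an addition, not something the text above prescribes); regulated environments will have their own required schema and storage rules.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class InspectionRecord:
    unit_id: str
    station: str
    verdict: str          # e.g. "accept", "reject", "review"
    defect_score: float
    model_version: str    # hypothetical field, useful when tracing a decision
    image_path: str
    inspected_at: str     # ISO 8601 timestamp in UTC


def make_record(unit_id, station, verdict, defect_score,
                model_version, image_path, now=None):
    """Build a record, stamping it with the current UTC time by default."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return InspectionRecord(unit_id, station, verdict, defect_score,
                            model_version, image_path, timestamp)


def to_json_line(record):
    """Serialize one record as a single JSON line for append-only logs."""
    return json.dumps(asdict(record), sort_keys=True)
```

Freezing the dataclass keeps a record from being altered after it is created, which fits the audit-trail purpose; writing one JSON object per line keeps the log easy to append to and to search later.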

This data supports audit requirements and enables more effective root cause analysis. When a defect is identified downstream, teams can trace it back to specific conditions on the line, whether related to equipment, materials, or environmental factors.

Over time, these records become a valuable resource for improving process stability and reducing variability.

Failure Modes and Practical Limitations

Despite the benefits, edge-based inspection systems are not without limitations. False positives can disrupt production if reject thresholds are too aggressive, while false negatives can allow defects to pass through undetected. Balancing these outcomes requires careful tuning and ongoing monitoring.
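The trade-off can be made visible by measuring both error types at different reject thresholds on a labeled validation set. The sketch below assumes the model outputs a defect score per unit and that a unit is rejected when its score is at or above the threshold; the function name and the sample data are invented for illustration.

```python
def rates_at_threshold(scores, is_defective, threshold):
    """Return (false_reject_rate, miss_rate) for a given reject threshold.

    false_reject_rate: share of good units that would be rejected.
    miss_rate: share of defective units that would be accepted.
    """
    good = [s for s, bad in zip(scores, is_defective) if not bad]
    defective = [s for s, bad in zip(scores, is_defective) if bad]
    if not good or not defective:
        raise ValueError("need at least one good and one defective unit")
    false_reject_rate = sum(s >= threshold for s in good) / len(good)
    miss_rate = sum(s < threshold for s in defective) / len(defective)
    return false_reject_rate, miss_rate


# Hypothetical validation scores and ground-truth labels.
scores = [0.1, 0.2, 0.4, 0.6, 0.7, 0.9]
labels = [False, False, False, False, True, True]

aggressive = rates_at_threshold(scores, labels, 0.5)  # (0.25, 0.0)
lenient = rates_at_threshold(scores, labels, 0.8)     # (0.0, 0.5)
```

On this toy data, the lower threshold catches every defect but rejects a quarter of the good units, while the higher threshold rejects no good units and lets half the defects through. Choosing between them is the tuning decision described above.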

Model drift is another concern. Changes in materials, tooling, or environmental conditions can gradually reduce model accuracy. Without a process for retraining and validation, performance can degrade over time.
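A simple way to notice this kind of gradual change is to compare a rolling average of recent defect scores against a baseline recorded at validation time. The sketch below is one such check under stated assumptions: the `DriftMonitor` class, its parameters, and the choice of the mean score as the monitored statistic are illustrative, and a sustained shift in score is only a hint that retraining may be needed, not proof of lost accuracy.

```python
from collections import deque


class DriftMonitor:
    """Flag when the rolling mean score strays from a validation baseline."""

    def __init__(self, baseline_mean, tolerance, window=100):
        self.baseline_mean = baseline_mean
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)  # oldest scores fall off the end

    def update(self, score):
        """Record a score; return True once the window is full and shifted."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data yet to judge
        rolling_mean = sum(self.scores) / len(self.scores)
        return abs(rolling_mean - self.baseline_mean) > self.tolerance


monitor = DriftMonitor(baseline_mean=0.10, tolerance=0.05, window=4)
stable = [monitor.update(0.1) for _ in range(4)]
shifted = [monitor.update(0.3) for _ in range(4)]
```

Here `stable` contains only `False`, while the last entry of `shifted` is `True`, because by then the window holds nothing but the higher scores.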

Maintenance also becomes part of the equation. Cameras need calibration, lighting needs consistency, and edge devices require updates and monitoring. These are ongoing responsibilities, not one-time setup tasks.

In many cases, sustaining performance is more demanding than achieving it initially.

What Changes as Systems Scale

As deployments expand across multiple lines or facilities, managing edge AI systems becomes more complex. Version control, model updates, and data synchronization need to be handled in a structured way. Hybrid setups (where edge devices handle real-time inference and centralized systems manage training and coordination) are becoming more common.

There is also a gradual move toward combining multiple data sources. Visual inspection is being supplemented with thermal, acoustic, and sensor data to provide a more complete view of production conditions. This multimodal approach allows systems to detect issues that would not be visible through images alone.

What begins as a single inspection station often evolves into a broader quality monitoring system.

Edge AI does not replace existing quality processes. It reshapes how and where those processes happen, bringing inspection closer to the point where defects are introduced and where corrective action can still make a difference.
