Something stirred in public developer tools and rumor feeds this week: strings in a Google-related repository, benchmark screenshots circulating on social media, and a steady drumbeat of Pixel hardware leaks pointing to deeper Gemini integrations.

Taken together, they create a plausible picture: Google is testing something new. How close that something is to a public release, and how big a leap it is, remain open questions.

Key takeaways

- Developers found meaningful code changes in Google’s Gemini tooling and related repos. That’s a real signal that internal testing and model rotation are happening.

- Multiple credible outlets are reporting Pixel 10 / Made-by-Google leaks that highlight a “Camera Coach” and conversational photo-editing features powered by Gemini. Those device leaks make deep product integration plausible.

- Benchmark screenshots claiming Gemini 3.0 beats other unreleased models are circulating on social platforms. They are interesting but unverified, and they lack a reproducible methodology. Treat them as rumor.

- The strongest, actionable implication is product direction: bigger context windows, tighter multimodal device hooks (camera + watch), and more built-in planning/tool use appear to be Google’s focus based on public research threads like Project Astra and observed product leaks.

- Practical next steps: test today’s tools (Gemini codelabs, current multimodal features), map workflows that would benefit from much larger context and planning, and watch the August 20 event for official product announcements.

What Leaked in Gemini 3.0 and Why People Took Notice

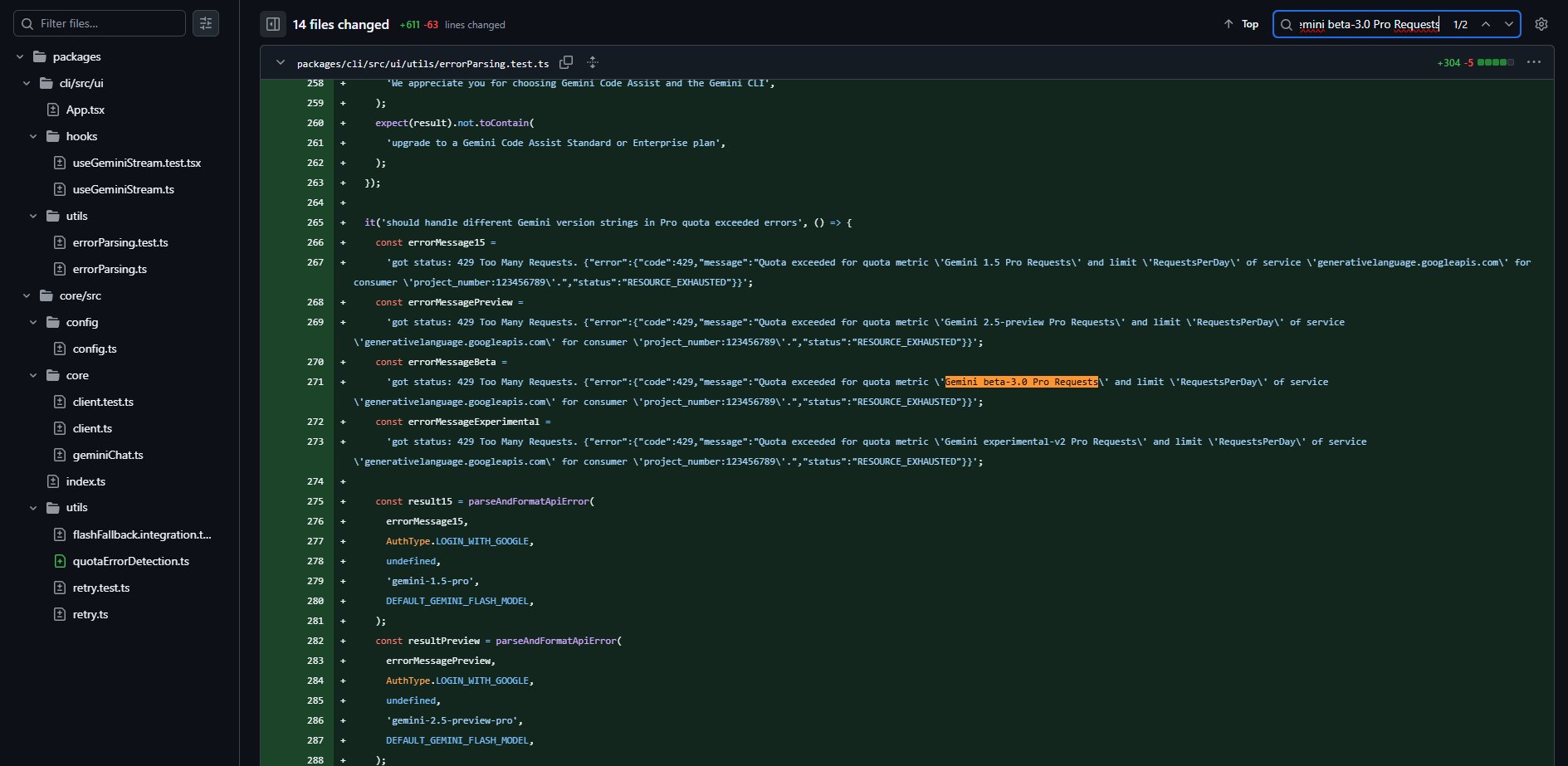

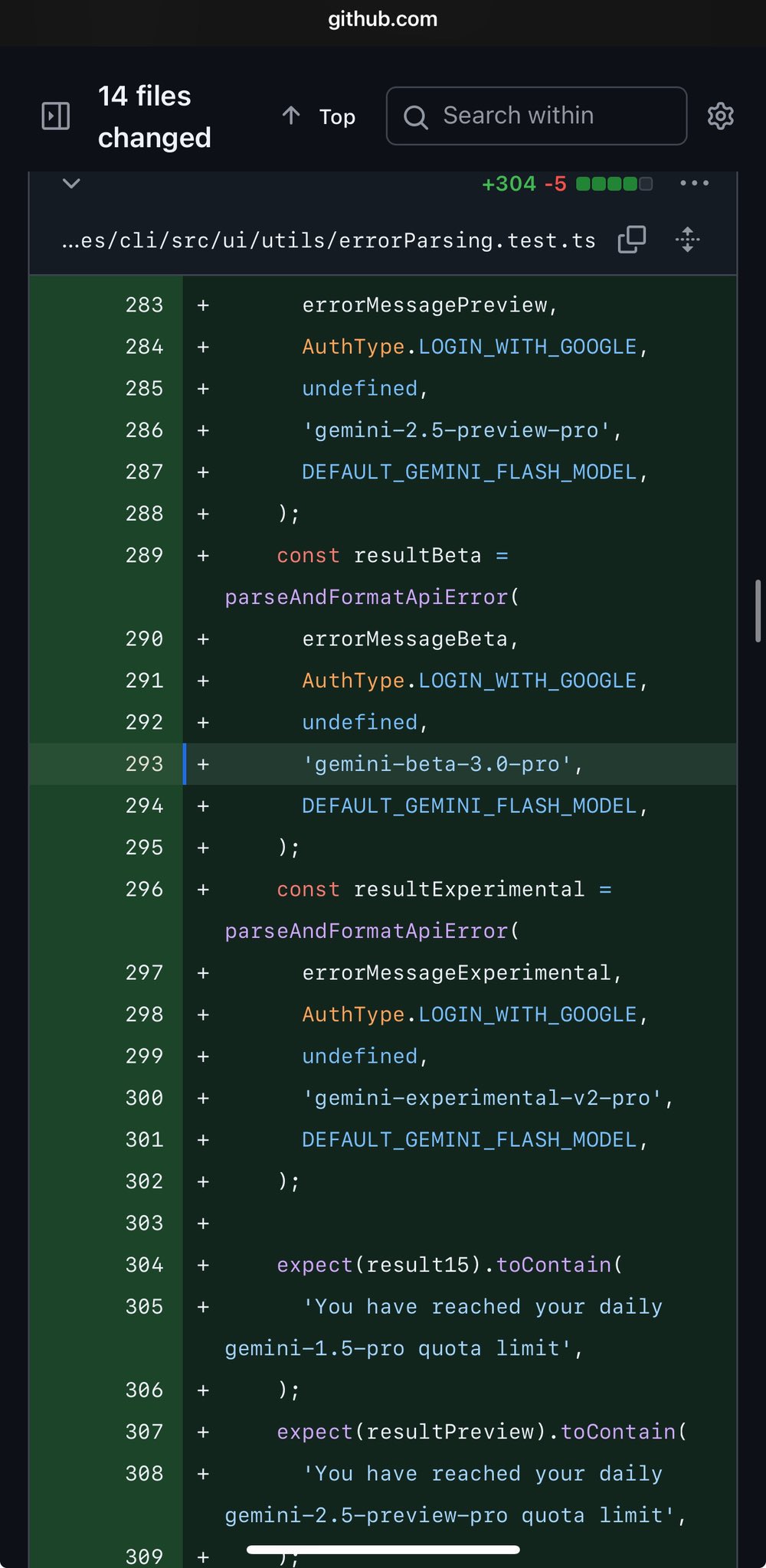

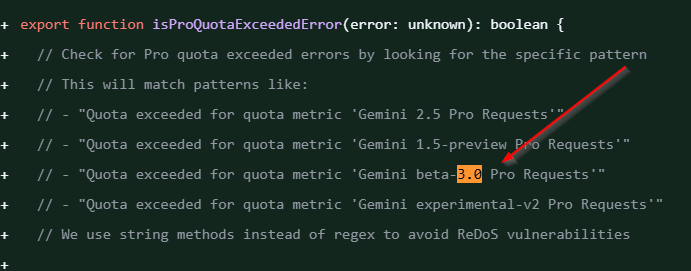

People first spotted model-name strings and related changes in public Google-associated projects, most notably in the Gemini CLI and surrounding repos. When official tooling starts referencing new model aliases (or fallback model names), engineers and reporters treat that as a genuine signal that internal testing and orchestration work is underway.

There’s a recent commit in the google-gemini/gemini-cli repository that touches model fallback and quota handling and references Gemini model variants used in the codebase. That kind of change isn’t proof of a final product, but it is proof that teams are rotating model names and defaults in developer tools.
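To make "model fallback and quota handling" concrete, here is a minimal Python sketch of the general pattern. It is not taken from the gemini-cli codebase: the model names, the `QuotaExceededError` class, and the `call_model` callback are hypothetical stand-ins chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


class QuotaExceededError(Exception):
    """Hypothetical error meaning a model's quota is exhausted."""


@dataclass
class FallbackResult:
    model: str  # the model that actually answered
    text: str   # the generated response


def generate_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> FallbackResult:
    """Try each model in order, moving on only when quota runs out."""
    last_error: Optional[Exception] = None
    for model in models:
        try:
            return FallbackResult(model=model, text=call_model(model, prompt))
        except QuotaExceededError as err:
            last_error = err  # remember why this model was skipped
    raise RuntimeError("every configured model is out of quota") from last_error


def fake_call(model: str, prompt: str) -> str:
    # Stand-in for a real API client; the model names are made up.
    if model == "primary-model":
        raise QuotaExceededError(model)
    return f"[{model}] {prompt}"


result = generate_with_fallback(
    "hello", ["primary-model", "fallback-model"], fake_call
)
print(result.model, "->", result.text)
```

The relevant point for reading repo signals is that the ordered list of model names lives in the tooling itself, so a change to that list shows up in public commits before any announcement.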

Separately, independent sites and aggregators have flagged mentions of “gemini-beta-3.0” or similar tags in forks, docs, and issue threads. Those mentions are noisy (different repos and forks use different naming), but they add context to the idea that Google and partners are preparing next-generation model names and tooling hooks. Treat these repo references as meaningful engineering signals, not marketing copy.

Pixel Hardware Leaks: Camera Coach and Device Hooks

Multiple mainstream tech outlets covering the upcoming Made-by-Google event (August 20, 2025) report consistent leaks: the Pixel 10 series will include AI-forward features, with a rumored “Camera Coach” that gives real-time framing/lighting guidance and conversational photo editing powered by Gemini.

The Pixel Watch is also expected to gain deeper Gemini features for style and contextual assistance. These are device-level product clues: even if the underlying model version is unreleased, Google clearly plans to bake Gemini into phone and wearable workflows.

Why that matters: software-first model improvements have limited reach until they’re integrated into devices and apps. A Camera Coach or conversational editor on a flagship phone is a distribution vector that makes advanced multimodal features visible and useful to millions overnight, not just to developers testing APIs.

The Benchmark Story: What’s Real and What Isn’t

Screenshots and social posts claim a leaked benchmark result in which “Gemini 3.0” scores higher than several competitor models on a benchmark shared online as “Humanity’s Last Exam.”

Those screenshots circulate on Reddit and X and have been picked up by rumor blogs. Important: none of these posts link to a reproducible dataset, standard methodology, or an official Google release.

That absence matters. Benchmarks without reproducible context are poor evidence for model superiority.

In short: the numbers are attention-grabbing but unverified.

What the Rumor Cluster Suggests Google is Building

Across leaks, repo signals, and public research threads (notably Project Astra), three capability areas repeatedly appear:

- Bigger context windows: talk of context lengths beyond current public claims, with smarter memory and retrieval. If true, this changes workflows: a model that reliably reasons across dozens of documents or an entire project can produce coherent multi-step deliverables from one session. Project Astra and other Google research efforts have explicitly explored memory and long-term context, making this a plausible direction.

- Built-in planning and verification: instead of toggling special “thinking” modes or using handcrafted chains, the model would plan tasks, check intermediate steps, and self-verify outputs as part of normal operation. This reduces brittle multi-step failures and makes the assistant more reliable for complex workflows.

- Realtime multimodal and on-device actions: live camera understanding, conversational photo editing, and sketch-to-software flows that convert drawings into prototypes or code. Google’s developer codelabs already show early sketch→code experiments with Gemini; the rumored direction is making that flow faster, more reliable, and tightly integrated with devices like phones and watches.

Signal vs. Noise

Strong signal

- Public commits and repo edits in the Gemini tooling ecosystem that reference model aliases and fallback behaviors. These are real engineering artifacts that show internal model management is active.

- Device leaks showing consistent features (Camera Coach, conversational editing) across multiple reputable outlets. Those leaks describe product intent more than model internals, and product intent shapes how models are used.

Mixed / uncertain

- Benchmark screenshots on social platforms: attention-grabbing but missing reproducibility and methodology. Treat as rumor until validated.

- Exact release timing and branding (e.g., whether the public will see “Gemini 3.0” and when). Many of the timelines on Reddit and prediction markets are speculative.

What this Could Change for Businesses and Creators

If Google ships any combination of the rumored capabilities, the practical effects are straightforward:

- Content workflows accelerate: A single session could ingest a vault of brand assets and documents, plan a campaign, draft content, and verify links and CTAs, all within a single context. That reduces iteration and handoffs.

- Prototyping democratizes: Better sketch→code means non-technical founders can iterate interfaces faster and hand functional prototypes to developers, shortening product cycles.

- Mobile creativity shifts: Having a camera that coaches or a phone that performs conversational editing means small teams can produce higher-quality visuals and marketing assets without hiring specialists.

Those shifts aren’t automatic. They require companies to rework processes and use models as tools inside existing workflows rather than as novelties.

Practical Steps You Can Take

- Try the available tools: Google’s codelabs and current Gemini/Canvas features already support sketch-to-code and multimodal experiments. Start building repeatable SOPs that a future model could scale.

- Map high-value workflows: Identify where larger context, planning, or live camera understanding would save the most time or money, such as marketing calendars, proposal drafting, UX prototyping, or visual asset creation.

- Prepare for device integration: If your product relies on user-generated media, plan how camera coaching or in-app conversational editing could improve conversion or engagement.

- Follow primary sources: Watch Google’s developer posts, the DeepMind/Project Astra updates, and the official Made-by-Google event for confirmation. Don’t treat single-sourced screenshots as final.
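One way to start mapping workflows is to check how much of your material would fit in a single session. The sketch below is a rough Python estimate, not a real tokenizer. The four-characters-per-token ratio, the 128,000-token window, and the 8,000-token output reserve are all assumptions for illustration; substitute the figures published for whichever model you actually use.

```python
from typing import Iterable, Tuple

# Assumption: roughly 4 characters per token for English prose. Real
# tokenizers vary, so treat every number here as a ballpark figure.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for a piece of text."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(
    documents: Iterable[str],
    context_window_tokens: int,
    reserved_for_output: int = 8_000,
) -> Tuple[int, bool]:
    """Return (estimated input tokens, whether input plus reserve fits)."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total, total + reserved_for_output <= context_window_tokens


# Placeholder documents standing in for a brand guide and a campaign brief.
docs = ["brand guide " * 1_000, "campaign brief " * 2_000]
total, ok = fits_in_context(docs, context_window_tokens=128_000)
print(f"estimated input tokens: {total}, fits: {ok}")
```

Workflows whose documents already overflow today’s limits are the ones most likely to benefit if larger context windows do ship.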

There are meaningful engineering signals that Google is iterating on next-generation Gemini capabilities: repo changes, consistent device leaks, and public research pointing in similar directions. That combination makes a release plausible in the near term.

The splashy benchmark screenshots, however, are unverified and should be read as rumor until independently reproduced.

If you run a business, the smart play is practical: use today’s tools to build workflows that will scale if and when a higher-context, multimodal assistant arrives. That way you convert rumor risk into a readiness advantage.
